AI Is Powerful. But Are You Actually Using It Right?

The conversation around AI and its impact on society is no longer something you can scroll past. Studies are coming out, debates are picking up, and the questions being asked are getting harder to ignore. What is AI actually doing to how we think? What does overreliance look like in real life? How much of what it tells us can we actually trust? These are not small concerns. If anything, we are not talking about them enough.

In 2025, researchers at MIT's Media Lab published a study that got a lot of people's attention. Fifty-four students were monitored with EEG headsets while writing essays, divided into three groups: one using ChatGPT, one using a search engine, and one working entirely on their own. The students relying on AI showed the weakest neural connectivity, with up to 55% lower cognitive engagement than the unaided group. The researchers called this cognitive debt: the idea that when we repeatedly rely on AI, we replace the effortful cognitive processes required for independent thinking, in effect taking out a loan against our own brains. The short-term payoff is convenience, but the long-term cost is a weakening of the neural connections that build critical thinking, creativity, and resilience.

And nowhere does this matter more than in education.

But before we get into that, there is a more honest question worth asking. Is AI the problem? Or is it the way people are using it?

Take your mind back to when ChatGPT arrived. Overnight, it was on every screen, in every group chat, in every classroom. And almost immediately, people started using it for everything. Questions they probably already knew the answer to. Essays they were more than capable of writing themselves. Thinking they could have done on their own, outsourced in thirty seconds. Not out of laziness necessarily, but because the option was right there, it was instant, and it worked.

Bring that into education and something concerning starts to take shape. What happens to critical thinking when the thinking can always be done for you? What happens to the muscle you never have to use? Realistically, a muscle you never exercise becomes weak and dormant, and when you eventually try to use it again, it barely responds. Even the thought of using it becomes a chore.

Here is where we land on it. AI without any real intention behind it, without any structure or purpose, can do genuine damage. Not because the technology itself is the villain, but because something this powerful in the hands of everyone, without any guidance on how to actually use it well, is a problem waiting to happen.

But AI is not only a problem. Used differently, in ways that push you to think harder rather than less, it has real potential. The idea is not new. It looks a lot like the Socratic method, like the teaching approaches that have always produced the deepest thinkers, just with something behind them now that can actually scale.

To get a clearer picture of what this looks like on the ground, we spoke candidly with educators around the world. We wanted honest conversations about what they are actually seeing, how AI could be integrated into their classrooms, and what they would be willing to accept. The results were interesting, though not surprising given the research already conducted on this topic.

Professors, tutors, educators across different countries, different institutions, different subjects. All saying roughly the same thing. Students are using AI to cheat, and the ones who are not cheating outright are still struggling with how to actually use it in a way that helps them learn. The logic is understandable. If it can give you the answer, why go through the process of finding it yourself?

But here is what is happening as a result. Students are getting the answers and losing the understanding. They are moving through coursework without the concepts actually landing, and when it comes to applying what they have supposedly learned, the gaps show up fast. This is what the MIT study was pointing to. Too much of anything can be harmful. But AI is a huge technological development, so what do we do with it? How should we use it?

Part of the problem is that AI, in its most basic form, was never built to teach. It was built to respond. And there is a significant difference between a tool that responds to your questions and a tool that actually helps you understand something. When AI does not know something, it does not always tell you that. Sometimes it just fills the space with something that sounds right. Confident, fluent, and wrong.

So what do you do with that?

For us, these conversations were not just concerning. They were clarifying, because a clearly defined problem points toward a solution. And what educators were describing was not a problem with AI itself. It was a problem with how AI was being applied. No focus. No reliable source material. No real thought given to how it communicates with a learner.

Give AI a clear purpose, ground it in accurate sources, and actually think about how it explains things to a person who is still learning, and something very different becomes possible.

That is what we set out to build. And those conversations with educators shaped what it became.

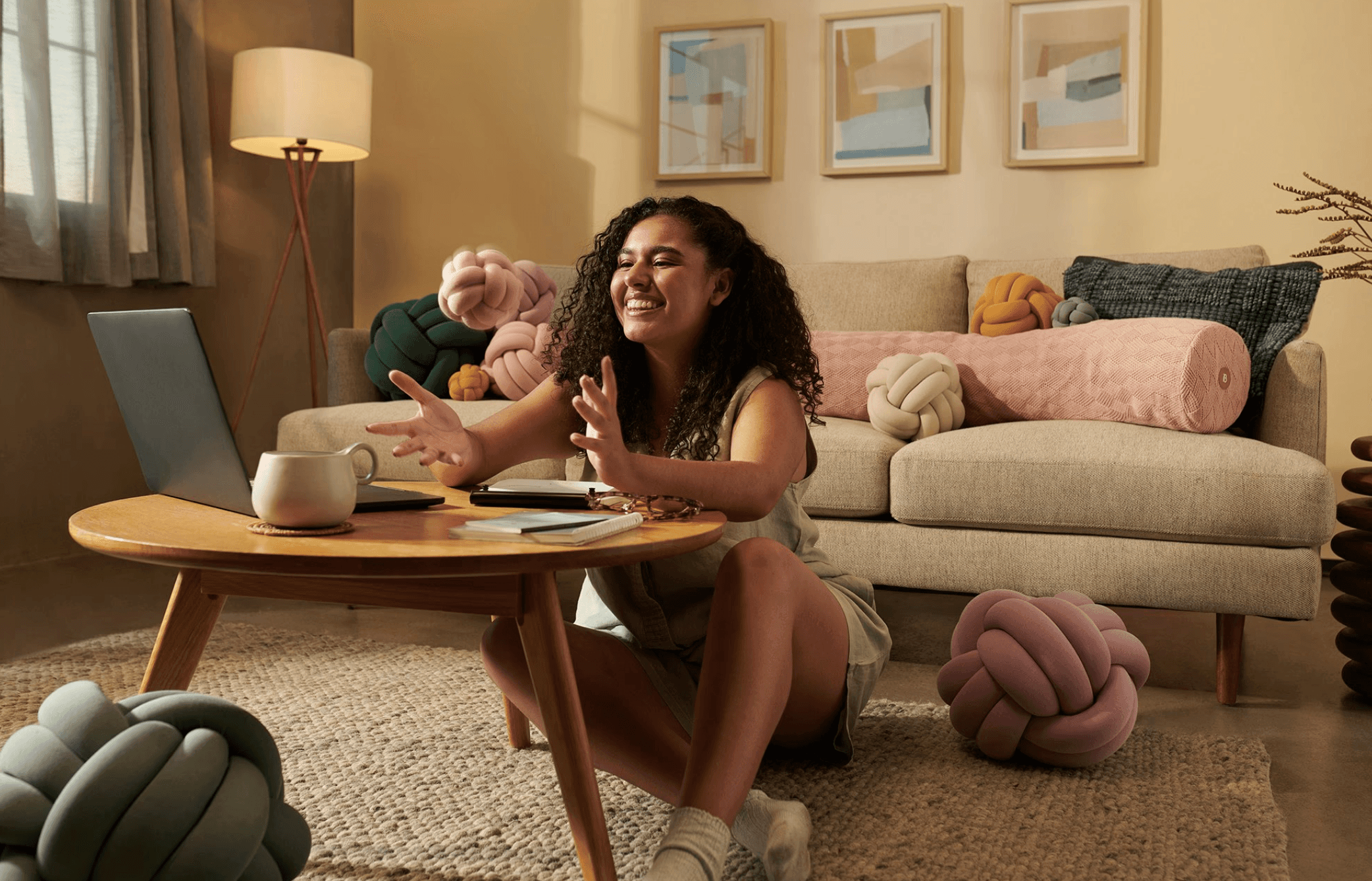

Meet Ayan. She is the AI at the heart of Lume Learn, YourLume's learning companion. Ayan was not designed just to answer questions. She was designed to teach. The way she communicates, the way she breaks ideas down, the way she responds when you are confused: all of it was built with those educator conversations in mind. She applies Socratic principles, pushing you to think rather than handing you conclusions. She works from verified source material, your own, so what she tells you stays grounded in it and does not stray. She adapts to how you are engaging, not just to what you are asking. We are still early and still developing, but the solutions we are finding suggest there is light at the end of the tunnel.

Ayan is what AI in education could look like if we actually took the problem seriously.

(Replace the video below with a video of Ayan working nicely)

Disclaimer: The study cited is still awaiting peer review, and the researchers themselves acknowledge that the sample size is small. But the direction of the findings is hard to ignore, and it reflects something educators have been saying for a while now.